On the 25th of June 2014, our virtualization unit hosted the yearly Technical Update session. The main goal of these sessions is to inform our clients about the latest technological developments in datacenter virtualization. This year, the following subjects were the main focus:

- Software Defined Performance with PernixData FVP

- Software Defined Storage

- Automation with vCloud Automation Center and vCenter Orchestrator

In this blog post, we’ll focus mainly on the second subject, Software Defined Storage (SDS). We have already discussed emerging trends in the storage landscape in an earlier post. As more and more vendors now offer an SDS solution, we can conclude that it’s a technology that’s here to stay. But why would you want to use SDS if you already have blazing fast and scalable SAN storage? And if you do, which vendor should you choose for your SDS solution?

The current SDS market includes the following solutions from major vendors. Other products exist or are currently in development, but these are the most popular:

- VMware VSAN

- Maxta MxSP

- EMC ScaleIO

- Nutanix VCP

- DataCore SANsymphony

- Atlantis ILIO USX

For this Technical Update session, we focused on testing VMware VSAN, Maxta MxSP, and EMC ScaleIO. In the coming weeks we will blog about our experiences with each product, the test results, and the advantages and disadvantages of each individual product.

To test these products properly, we needed representative hardware, which we found in the form of a Supermicro Virtual SAN Ready Node (http://blogs.vmware.com/vsphere/2014/03/virtual-san-ready-nodes-cisco-dell-fujitsu-ibm-super-micro-next-30-days.html). The specs of this system are as follows:

Three Supermicro X9DRT-PT nodes running vSphere 5.5 U1, each equipped with the following hardware:

- 2 x Intel Xeon E5-2620v2 (@2.10GHz)

- 64 GB RAM

- 3 x Seagate 1TB SATA drives

- 1 x Intel DC S3700 100GB SSD

- 1 x Intel DC S3700 200GB SSD

- 1 x M4 100GB SSD

- 1 x Mellanox MHGH28-XTC

The three nodes are connected over InfiniBand to a Xsigo VP780 I/O Director, which in turn provides 3 x 10GbE for vMotion, iSCSI and virtual machine connectivity.

Maxta MxSP

The first product we tested was Maxta MxSP.

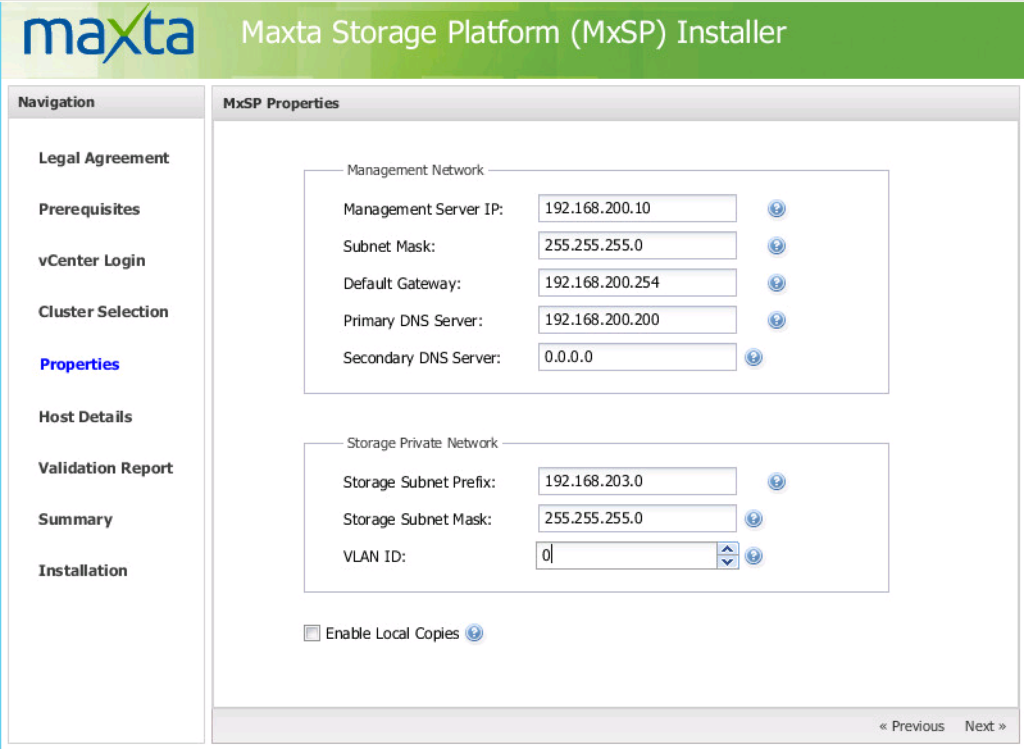

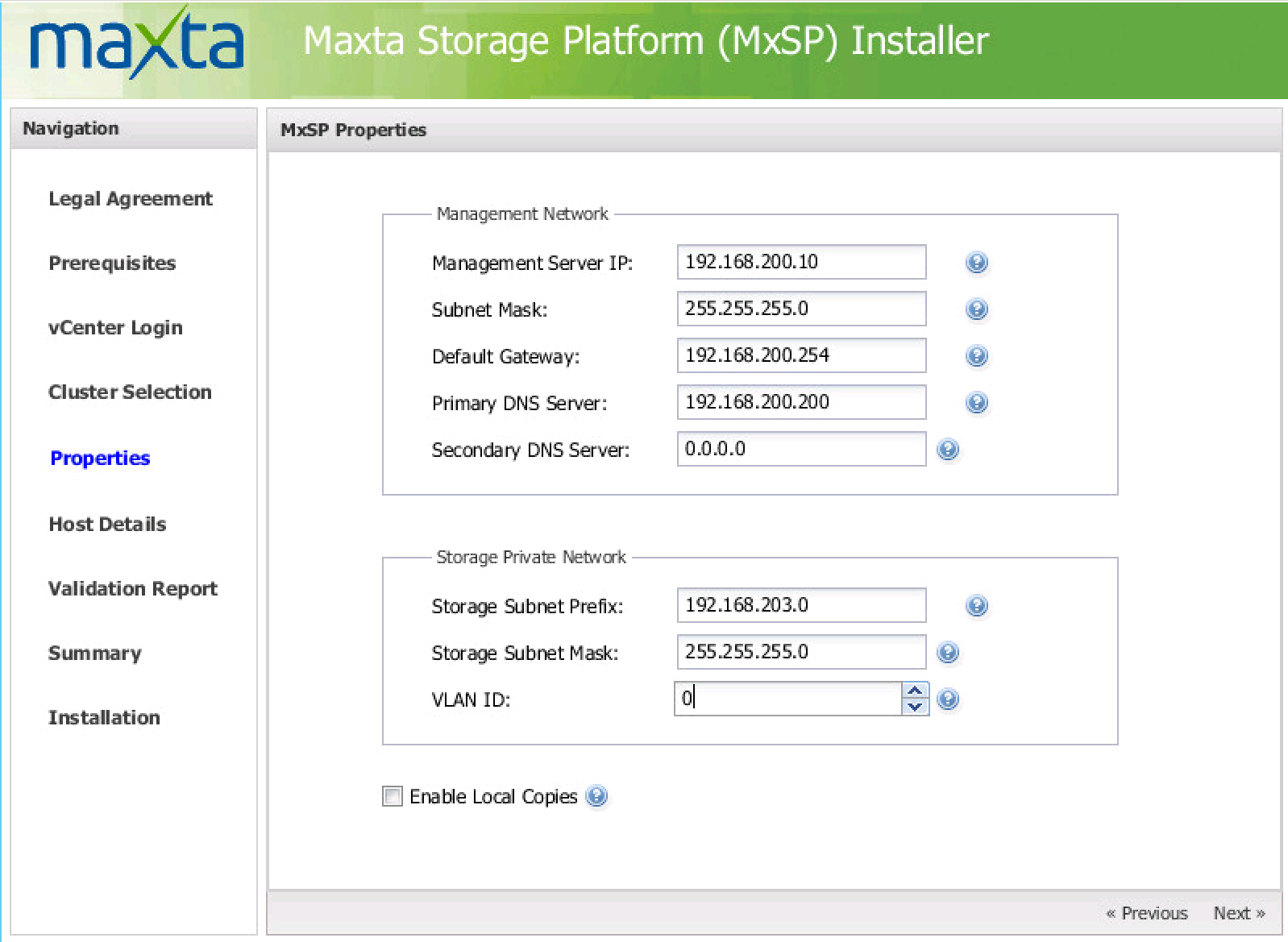

The first thing that stands out is that Maxta documents everything well, including the installation of vCenter. After reading the installation manual, we started the installation by running a command-line installer. After about 20 seconds, a Java-based graphical wizard appeared in which a few parameters can be entered. First, vCenter credentials are needed, followed by the selection of a vCenter cluster. Then the actual configuration starts:

Once all network parameters are entered, the complete MxSP configuration is set. The installation wizard then performs a lot of tasks automatically, such as adding an MxSP node to every host in the cluster, creating networks, building the shared datastore and adding all drives. It also automatically creates a replication network.

One thing that is a bit tricky is the “Enable Local Copies” option. Because MxSP doesn’t support hardware RAID controllers, Maxta wants you to add every available disk as a separate entity. The “Enable Local Copies” option lets you replicate data from one disk to other disks within the same node. This requires an even number of disks, yet the version of MxSP we used lets you tick the checkbox even when the number of disks is odd.

The result was a setup that completed but could not start.
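The missing check is easy to express. Below is a minimal sketch of a pre-flight validation that a wizard could run before committing the configuration; `validate_local_copies` and its inputs are hypothetical names of our own and are not part of MxSP. As an assumption, it counts only the data disks per node.

```python
def validate_local_copies(enable_local_copies: bool,
                          data_disks_per_node: dict[str, int]) -> list[str]:
    """Hypothetical pre-flight check; returns error messages (empty list = OK).

    "Enable Local Copies" mirrors data between disks inside a single node,
    so every node needs an even number of data disks for it to work.
    """
    errors: list[str] = []
    if not enable_local_copies:
        # Option not selected: nothing to validate.
        return errors
    for node, disk_count in sorted(data_disks_per_node.items()):
        if disk_count % 2 != 0:
            errors.append(
                f"{node}: 'Enable Local Copies' needs an even number of disks, "
                f"found {disk_count}"
            )
    return errors


if __name__ == "__main__":
    # Illustrative input: three 1 TB SATA drives in each of our three nodes.
    for message in validate_local_copies(True, {"node1": 3, "node2": 3, "node3": 3}):
        print(message)
```

Assuming only the three 1 TB SATA drives per node count as data disks, a check like this would have rejected the option on every node of our test setup instead of producing a cluster that could not start.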

Next, we tried to break the solution in a few ways:

- Remove a hard disk (HDD) from a Supermicro node

- Remove an SSD from a Supermicro node

- Power off a Supermicro node

The solution proved quite resilient: after each of the actions above, MxSP simply notified the administrator on the management tab that there was a problem. After we fixed the problem and rebooted the Supermicro node (for some reason, the hot-swappable disks weren’t recognized after reinsertion, so a reboot was needed), MxSP started rebuilding and replicating all VMs running on the datastore. The solution uses a RAID-5-like array across nodes, so a node failure causes no unavailability or downtime.

Performance testing was the next step. We used Iometer to test maximum IOPS, latency and throughput. Notably, performance with a small test file (100 MB) was much better than with a larger test file (2 GB). You can find more on this topic in the comparison/performance overview later in this blog.
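The three metrics Iometer reports are linked, which helps when sanity-checking results. As a general rule, and not anything specific to MxSP, throughput equals IOPS multiplied by the transfer size. By Little's law, average latency equals the number of outstanding I/Os (the queue depth configured in Iometer) divided by IOPS. The sketch below uses illustrative numbers, not our measurements; the function names are our own.

```python
def throughput_mib_s(iops: float, block_size_kib: float) -> float:
    """Throughput in MiB/s for a given IOPS rate and transfer size in KiB."""
    return iops * block_size_kib / 1024


def avg_latency_ms(outstanding_ios: int, iops: float) -> float:
    """Little's law: mean latency (ms) = outstanding I/Os / completion rate."""
    return outstanding_ios / iops * 1000


if __name__ == "__main__":
    # Illustrative workload: 20,000 IOPS with 4 KiB transfers at queue depth 32.
    print(f"{throughput_mib_s(20_000, 4):.3f} MiB/s")
    print(f"{avg_latency_ms(32, 20_000):.2f} ms")
```

If a reported latency is far from what this relationship predicts, the test setup (for example, the outstanding I/O setting) is worth double-checking before comparing products.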

The MxSP solution is quite good. The only thing it truly lacks is the ability to change the configuration after installation. To reconfigure, you need to rerun the installation wizard, which sometimes caused issues. Maxta told us that they will add configuration options in the next version.

In the next part of this SDS blog series, we will focus on VMware VSAN.