While helping a customer install and extend their repositories to accommodate their GFS backups, I came across a weird error.

What I did was this: I asked the storage department for some additional storage LUNs to extend my physical proxies, which also act as repositories, with an extra repository to house the GFS backups.

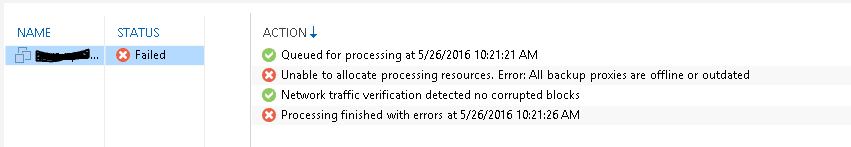

Then, all of a sudden, all the backups failed with a rather peculiar status:

Unable to allocate processing resources. Error: All backup proxies are offline or outdated.

The proxies showed up as “unavailable” under Managed servers in the Backup Infrastructure pane.

Rescanning solved the problem at first, but as soon as I tried to restart a backup job it failed again with the same error, and the proxies came back as “unavailable” again.

Digging through the log files, I stumbled upon the same error but could not quite figure out what was causing it. Everything else was working great, except the backups. Hmm, this sounds like a severity 1…

With some help from Christos from Veeam Support we retraced the steps.

First, we looked at what happened when rescanning the proxies/repositories while they were “unavailable”.

We followed the log sequence down to the host/repository rescan that I had manually executed to refresh the proxies, and we found the following:

<07> Error Failed to connect to transport service on host ‘hostname.proxie.FQDN’ (System.Exception)

<07> Error Failed to connect to Veeam Data Mover Service on host ‘hostname.proxie.FQDN’, port ‘6162’

<07> Error Access is denied

The above message doesn’t necessarily mean that something is broken. A plausible reason for it is that the connection through port “6162” to the repositories/proxies was blocked by a local firewall or antivirus restriction. After a reboot, this restriction might be lifted and the connection to the repositories/proxies can succeed again, so the jobs will run normally. If there is no firewall or antivirus installed on the Veeam server, it could also be a local network issue that a reboot happens to resolve.
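To rule the firewall theory in or out before rebooting anything, a quick TCP check from the backup server can help. The following is only a minimal sketch in Python, not a Veeam tool: the function name `can_connect` and the host names in the usage comment are made up for illustration, and the default port is simply the 6162 from the log above. Note that it only tells you whether the port is reachable; an “Access is denied” can still come from permissions even when the port is open.

```python
import socket


def can_connect(host: str, port: int = 6162, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened.

    This only tests network reachability of the port (6162 is the
    port shown in the log excerpt); it does not authenticate against
    or talk to the service listening behind it.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS failures.
        return False


# Example usage (hypothetical host names, replace with your own):
# for host in ["proxy01.example.local", "proxy02.example.local"]:
#     print(host, "reachable" if can_connect(host) else "NOT reachable")
```

If this returns False for a proxy while the server itself is up, a local firewall or antivirus rule is a reasonable next suspect.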

What actually caused it was the following:

When I added the drive to the Windows Server 2012 R2 proxy (also repository) server, the new drive for the new repository got the letter D: by default, while the already existing default repository points to E:.

So I added the repository, and then I thought: OOOOPS, the new repository should point to F: instead.

What I did then might be the cause of the incident….

I went back to the proxy server, deleted the drive, and created it again as drive F: (I did this on both proxies). Then I went back to the backup server, removed the two newly created repositories, and created them again, this time pointing to the F: drive.

My thinking is that changing the drive letter also changed the default drive letter for the existing repositories, so the backups were pointing to a drive/location that is valid but cannot be found…

A day later I repeated all the steps exactly as before, but this time I added the drive with the correct letter right away. And it went 100% correctly.

As it turns out, pointing the backups to a different repository is much easier than changing disk volume names and the like.

If you face this situation in the future, it is enough to go to backups -> disk and click “remove from backups” for the backup set that has been “moved” to a new drive letter. Once that is done, the backups will no longer exist in your database (make sure not to select “remove from disk”, though). Then, when you rescan the repository, Veeam will import the existing backups into the database from their new location, making them available for use again.

Once they are imported, they can be mapped to their original job by opening backup job settings -> storage -> map backup.
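Before removing anything from the database, it can be reassuring to confirm that the backup files really are sitting at the new location, since that is what the repository rescan has to find. The sketch below is my own illustration, not part of Veeam: the function name `find_backup_files` and the example path are assumptions, and it simply looks for the usual Veeam backup file extensions (.vbk, .vib, .vrb, .vbm).

```python
from pathlib import Path

# Common Veeam backup file types: full, incremental,
# reverse incremental and backup metadata.
BACKUP_SUFFIXES = {".vbk", ".vib", ".vrb", ".vbm"}


def find_backup_files(root: str) -> dict[str, list[Path]]:
    """Group backup files found under root by their parent folder name.

    The parent folder usually corresponds to the job name, which makes
    it easy to see which jobs have files at the new location.
    """
    found: dict[str, list[Path]] = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in BACKUP_SUFFIXES:
            found.setdefault(path.parent.name, []).append(path)
    return found


# Example usage (hypothetical repository path on the new drive):
# for job, files in find_backup_files(r"F:\Backups").items():
#     print(f"{job}: {len(files)} backup file(s)")
```

If a job folder comes back empty or is missing altogether, sort that out first, because a rescan can only import what is actually on disk.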

There is also a KB article about this which can help you understand this use case a bit better: https://www.veeam.com/kb1729

With thanks to Christos from Veeam Support for helping and pointing me in the right direction.

Arie-Jan Bodde